Language models have an uncanny ability to generate images in one-shot from natural language. Given a detailed prompt, you can get a close approximation to any artist, theme, or genre you like. I do find it amazing that, even though language is not the substrate through which paintings are created or reasoned about, a language model can somewhat faithfully reproduce images from text. While it’s technically interesting, though, I find it quite unsatisfying from an artistic view. I have a hunch that some of my dissatisfaction comes from the immediacy of the result, and the way that language models derivatively reproduce concepts.

To test if that’s the source of my dissatisfaction, I’ve built an app which produces LLM-generated images iteratively, rather than in one-shot. A gallery of outputs is available here. While doing so, I found an interesting allegory about the fragility of language-model-generated artefacts: they can collapse into unrecoverable messes, and the mess can be traced back to a couple of obviously bad strokes. This phenomenon is mirrored strikingly well in my day-to-day experiences as a software engineer reviewing LLM-generated code.

One of my favourite books is Tolstoy’s What Is Art. In it, he argues that art should be accessible, and that high-art is bad art because it is exclusive, and incomprehensible unless you’ve had an education privileged enough to understand it. For art to be good, Tolstoy argues, it must be accessible, and the artist must sincerely convey a unifying moral theme to the audience [1]. I don’t necessarily agree with everything Tolstoy writes in What is Art, but I think it’s one useful perspective from which to consider art.

While a few NVIDIA H100s in a datacenter summoning a picture of a Chagall-inspired Goat probably does satisfy Tolstoy’s accessibility requirement, it doesn’t satisfy the sincerity angle to my liking. Meanwhile, Chagall himself painting I and the Village satisfies Tolstoy’s need for sincerity, but misses out on the accessibility side of things (most people’s first impression of a Chagall is “what even is that?”).

I wanted to know if I could produce something that keeps the accessibility of language-model generation, while introducing some form of sincerity. Rather than one-shotting, I wanted to know what happens if language models are asked to create images through a more human process: applying strokes one at a time, and spending time during the process considering the piece & progress towards its objectives. I want to know if they produce something that’s more earnest and artistically satisfying under those conditions.

The application I’ve built accepts a few CLI arguments, from which it generates a painting concept, then passes that and the current canvas into a vision language model (VLM). It asks the VLM to consider what the next stroke should be, and applies that to the canvas. I invoke the paint-procedure in a repeated loop to gradually build up the painting, alongside all of the reasoning behind each stroke. Eventually the VLM decides that the painting is “complete” (or it reaches some predetermined maximum number of strokes).
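The loop described above can be sketched roughly as follows. This is a minimal illustration, not the app's actual code: `Stroke`, `Canvas`, `paint`, and `fake_vlm` are hypothetical names, the stroke fields are assumed, and the model call is replaced by a stub so the example runs without any API.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Stroke:
    """One stroke plus the reasoning given for it (hypothetical shape)."""
    x0: int
    y0: int
    x1: int
    y1: int
    colour: str
    reasoning: str


@dataclass
class Canvas:
    """Stand-in canvas that just records the strokes applied so far."""
    strokes: list = field(default_factory=list)

    def apply(self, stroke: Stroke) -> None:
        self.strokes.append(stroke)


# A "model" here is any callable that looks at the concept and the canvas and
# returns the next stroke, or None once it considers the painting complete.
NextStroke = Callable[[str, Canvas], Optional[Stroke]]


def paint(concept: str, next_stroke: NextStroke, max_strokes: int = 500) -> Canvas:
    """Repeatedly ask for a stroke until the model stops or the cap is hit."""
    canvas = Canvas()
    for _ in range(max_strokes):
        stroke = next_stroke(concept, canvas)
        if stroke is None:  # the model decided the painting is complete
            break
        canvas.apply(stroke)
    return canvas


def fake_vlm(concept: str, canvas: Canvas) -> Optional[Stroke]:
    """Placeholder for a real VLM call: draws three lines, then stops."""
    n = len(canvas.strokes)
    if n >= 3:
        return None
    return Stroke(0, n * 10, 100, n * 10, "black", f"line {n + 1} for {concept}")


if __name__ == "__main__":
    result = paint("patchwork elephant", fake_vlm)
    for s in result.strokes:
        print(s.reasoning)
```

Keeping the reasoning on each stroke is what would let a viewer replay the painting step by step alongside the model's commentary; the `max_strokes` default is an arbitrary assumption.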

Here’s Claude Opus 4.7 iteratively constructing a Chagall-inspired Russian Village under moonlight.

I also built a companion website, which lets you step through the painting step-by-step to see what the language model was considering during each stroke. Here’s Claude Opus 4.6’s attempt at a patchwork elephant in the style of a children’s book illustration. Push the “play” button at the top to see it progress. The reasoning traces are displayed on each iteration on the right-hand panel. At stroke 285, you’ll see Claude’s reasoning “This iteration focuses on the two most impactful elements: the bold elephant silhouette outline and the expressive eye” as it applies a glassy eye to the piece.

Some select results

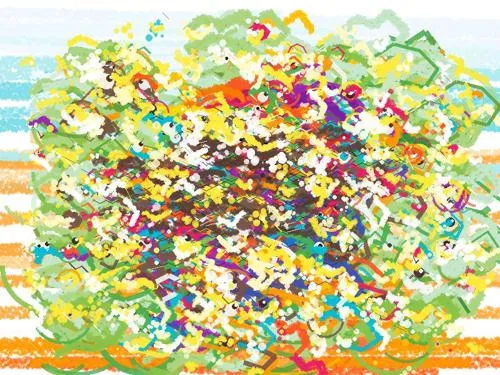

The quality of the output is… mixed and spiky. Usually it depends on the size of the model + context window. Small models running locally tend towards unrecognisable scribbles. Meanwhile frontier models trend towards recognisable images matching the subject matter. Below, four variants of Claude attempt to produce Patchwork Elephant images:

Links to the viewer site for each respective piece:

- Claude Opus 4.6 (large model)

- Claude Opus 4.7 (large model)

- Claude Sonnet 4.6 (medium model)

- Claude Sonnet 4.6 (medium model)

If you squint and you’re generous, you can see themes or inspirations in the produced media. It’s a visual way to see that LLM-based task completion suffers from a fallacy-of-composition type effect: the models are exceptional at one-shotting a task, but relatively much worse at performing the exact same task iteratively in small chunks.

The elephants above are produced by Anthropic’s Claude models, all of which are close to the frontier of current capabilities. Mistral have a year-old model, mistral-large, available, and the performance difference is noticeable, as shown below.

Mistral’s model is weak when I ask it to produce in batches of five strokes. When it’s given batches of 50 strokes, it makes something more interesting, and at least directionally-correct for a patchwork elephant. This is my favourite result from the project, because although it’s abstract, it manages to capture the limitations of the medium, while still representing the subject matter. It’s the closest thing to an earnest & sincere piece produced by the project.

I’ve built the front-end app so that humans and language models can interact with it to produce their own images. If you’re a human, go to the drawing page. Alternatively, point your browser-enabled language model of choice at the API Docs, and ask it to produce whatever you like.

It takes less effort to destroy something good than it took to build it in the first instance

My day-to-day work is in software engineering. While I’m still writing a lot of code by hand, I’m also reading a lot of LLM-generated code. Between my own and my colleagues’ LLM-incanted code, something I’ve noticed about apps built with LLM assistance is that they’ve got a kind of fragility. It’s one which I couldn’t properly pinpoint or articulate until working on this project.

The painting app produces a visual for each stroke, so you can scrub back and forward through the process, to see the paintings come into existence over time. You can see promising results emerging from the VLM early on, before vanishing beneath a catastrophic failure - usually applied in a single stroke. This has meant that a few times, I’ve been excited that the models were producing something worth looking at. But consistently, the VLM irreversibly destroys the painting, then takes increasingly destructive steps to repair its mistakes.

You can see this in Claude 4.6 creating a children’s book illustration. At around 300 strokes, the painting looks recognisable as an abstract digital illustration of a patchwork elephant in a jungle (not what was asked of it, but interesting nonetheless).

Then over the following 150 strokes, it starts to add fine-detail. VLMs are still poor at spatial reasoning, so strokes which would require fine-motor skills from a human are generally garbage from a VLM. At first, it adds a black squiggle, creating the outline of a haunted face. Then it adds a monkey with dozens of eyes, and a parrot whose body is entirely discontinuous.

While this creates some funny results, I think it brings a visual metaphor to the previously inarticulable fragility I see in codebases & other language model produced artefacts. The models are competent when working on broad-strokes, but they struggle in iteratively applying fine-detail changes (especially near the frontier of their capabilities), where they can be more destructive than helpful.

In both the paintings & codebases, at any given iteration, the language model only sees a small snapshot of the project. It may have access to some hints from previous commits or strategy documents, but since it’s limited to achieving its goal within its context window, it rushes towards implementing whatever solves the current problem.

I see this phenomenon a lot in PRs for the applications I maintain & contribute to. Lots of good-quality code will go into a repository, but one poorly thought out feature or commit can derail quality across the entire application. Once a repository transitions beyond its fragile state into a broken structure, it’s incredibly difficult to recover it, and get it back to a maintainable state. In most software projects, like in these generated images, new content is mostly additive. It can rarely be taken away or significantly re-architected after release.

Having said that, I do think that this fragility, and the visualisations of it on liamlaverty.com/paint-by-language-model might count as a form of art according to Tolstoy’s What is Art. Watching the VLM struggle to produce a Pelican riding a bicycle is visually interesting; it’s accessible; and it’s certainly not high art.

Personally, I can project meaning onto it: that struggle of conservation over progress is a universal phenomenon. I don’t think that these pieces bring the sincerity I was looking for though. Everything in the gallery still feels like a soulless derivative digital illustration, rather than an art piece. Maybe it’s just my prompts? The results I was able to produce remind me of Anthropic’s cleanroom implementation of a C compiler. The result is kind of directionally correct, but not what I was looking for.

Feel free to point your language model at the drawing canvas, and let me know if you get better results: https://www.liamlaverty.com/paint-by-language-model/draw

- A technical write-up of the app is available here: https://www.etive-mor.com/blog/how-the-language-model-painting-app-is-built/

- The code can be found on my github at: https://github.com/liamlaverty/paint-by-language-model

Footnotes

[1] It’s fairly ironic that Tolstoy wrote this treatise, given that he also wrote War and Peace - an artwork which nobody has ever described as “accessible” - but he acknowledges this irony, describing War and Peace as “Bad Art”.